"This is not your grandfather’s DLP or machine learning DLP."

Speaking in a keynote presentation at Zscaler’s Zenith Live EMEA event in Prague, Venkat Krishnamoorthi, senior director of product management at Zscaler, said that AI can boost productivity, and that “actually uniting AI and people together can propel your business forward.”

He acknowledged that there is a “productivity paradox” when it comes to connecting data, AI and people: “As the productivity absolutely goes up, however, the risk of data loss also goes up at exactly the same time.”

He said: “So we all understand that the cost of a breach can be significant, right? We’re in a regulated industry, but even for those of us that are in non-regulated industries, particularly, there is a significant cost of a data breach, and in fact a significant cost, whether it be in dollars or brand impact.”

Oversharing Access

He pointed to the various ways in which data can be lost: GenAI apps accessing data; tools like Copilot oversharing data; sensitive data being copied to USB drives; data shared from OneDrive or via a link that anybody can access; and emails containing sensitive data.

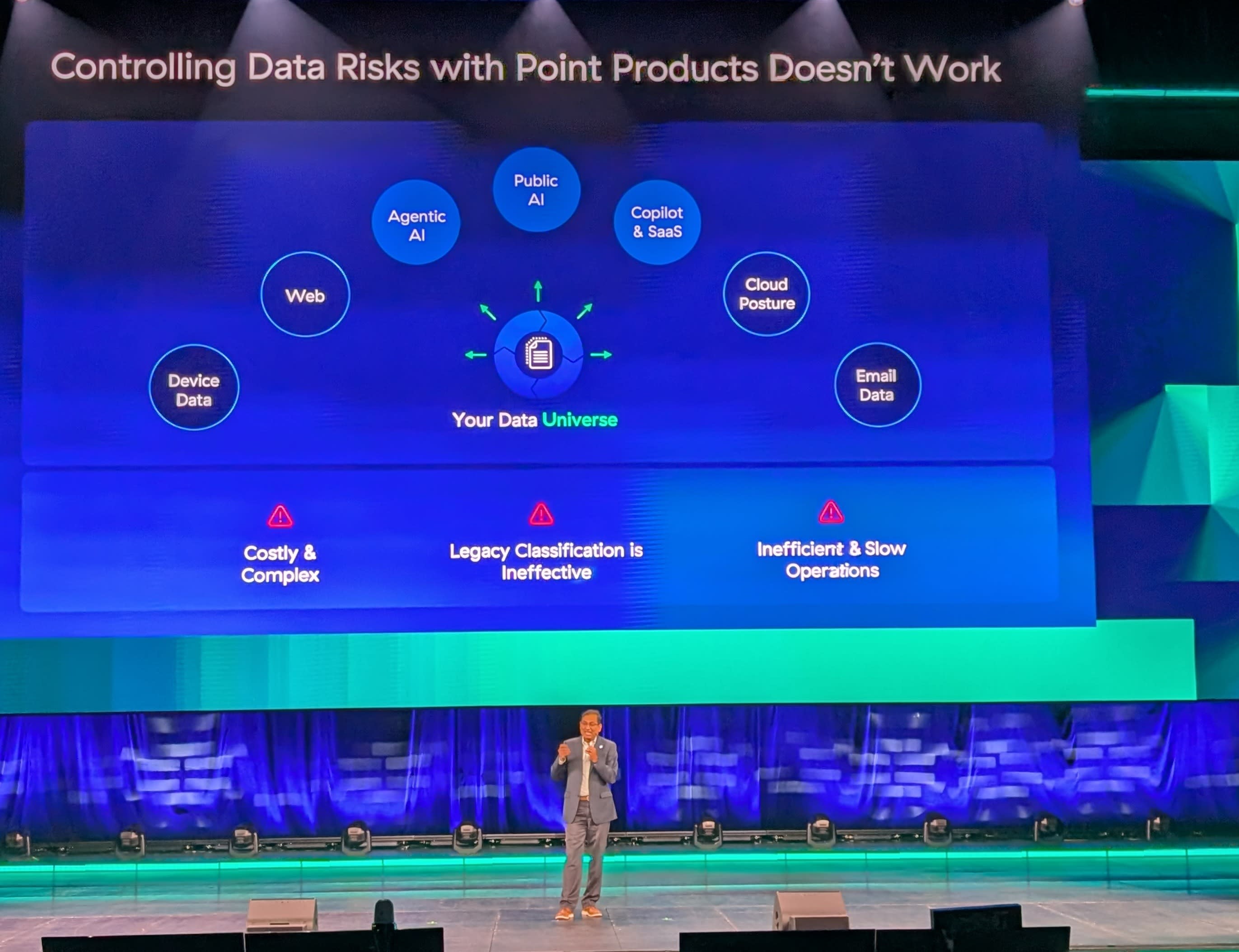

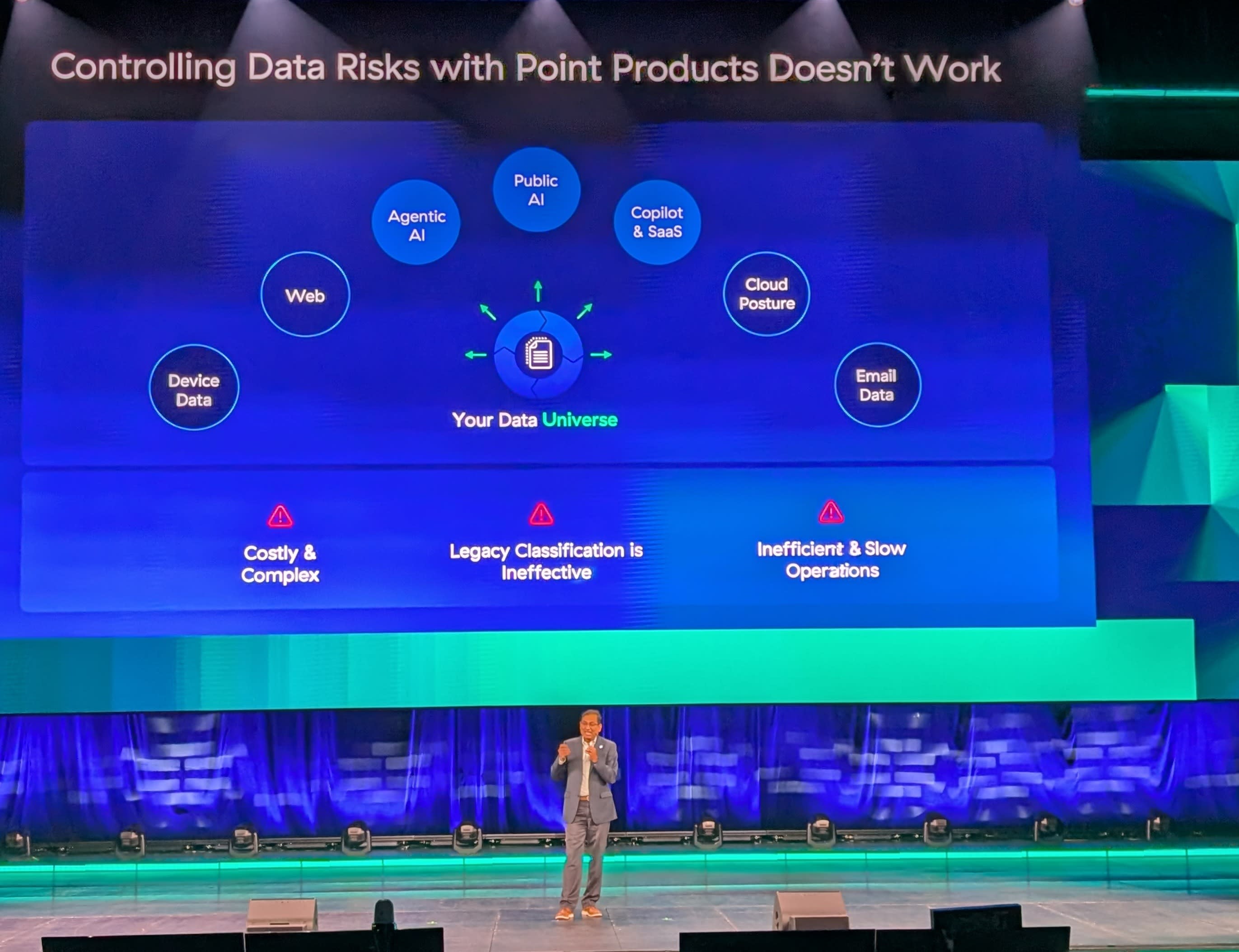

“The industry has responded to this over the last 10-15 years by providing you a whole bunch of point products that can actually give you some sort of control over these issues,” he said.

“So whether it be endpoint DLP products, email DLP products, or CASB. The problem with having all of these single point solutions is you want to have multiple of those in your environment. That can make it costly and complex. Because you have so many of these products running, the operations are inefficient as well.”

Inefficient Classification

Krishnamoorthi said the root cause of all of this is ‘inefficient classification’, as multiple products take the same data and classify that very same data in different ways. “So that obviously leads to inefficient operations, and so on.”

As for the antidote to this classification issue, Krishnamoorthi said that a human can decide whether a document is sensitive and should not be sent out, so the power of LLMs should be harnessed to “determine the same human level of intuition.”

He said: “This is not your grandfather’s DLP or machine learning DLP. This is real, true, GenAI-based, LLM-based AI classification.

“Imagine being able to use that kind of LLM technology for real-time data transfer, as the data is flying through your Zscaler proxy as a user is uploading. This is real-time data security. Reimagine the way you can do DLP with LLMs: that’s the bottom line here.”

He concluded by saying that C-level executives are pressuring security teams to enable AI, while those teams worry about the security risks. He presented this approach as a way to provide that security: it tells you which AI applications your users are using and offers controls over them.

Written by

Dan Raywood is a B2B journalist with 25 years of experience, including covering cybersecurity for the past 17 years. He has extensively covered topics from Advanced Persistent Threats and nation-state hackers to major data breaches and regulatory changes.

He has spoken at events including 44CON, Infosecurity Europe, RANT Forum, BSides Scotland, Steelcon and the National Cyber Security Show, and served as editor of SC Media UK, Infosecurity Magazine and IT Security Guru. He was also an analyst with 451 Research and a product marketing lead at Tenable.